Starting Point

The Addison Rae deepfake situation is one of the most talked-about examples of the harm this technology does to real people. Addison Rae is one of the most recognized social media personalities in the world, and her experience with deepfake content highlights a growing crisis that affects thousands of public figures and ordinary people every year.

This article covers the Addison Rae deepfake issue in full: what deepfakes are, how they have affected her life and career, the mental health toll on victims, the legal landscape around deepfake content, and what needs to change to protect people from this kind of harm. Her story also sheds light on a problem that the internet and lawmakers are only beginning to take seriously.

Who Is Addison Rae?

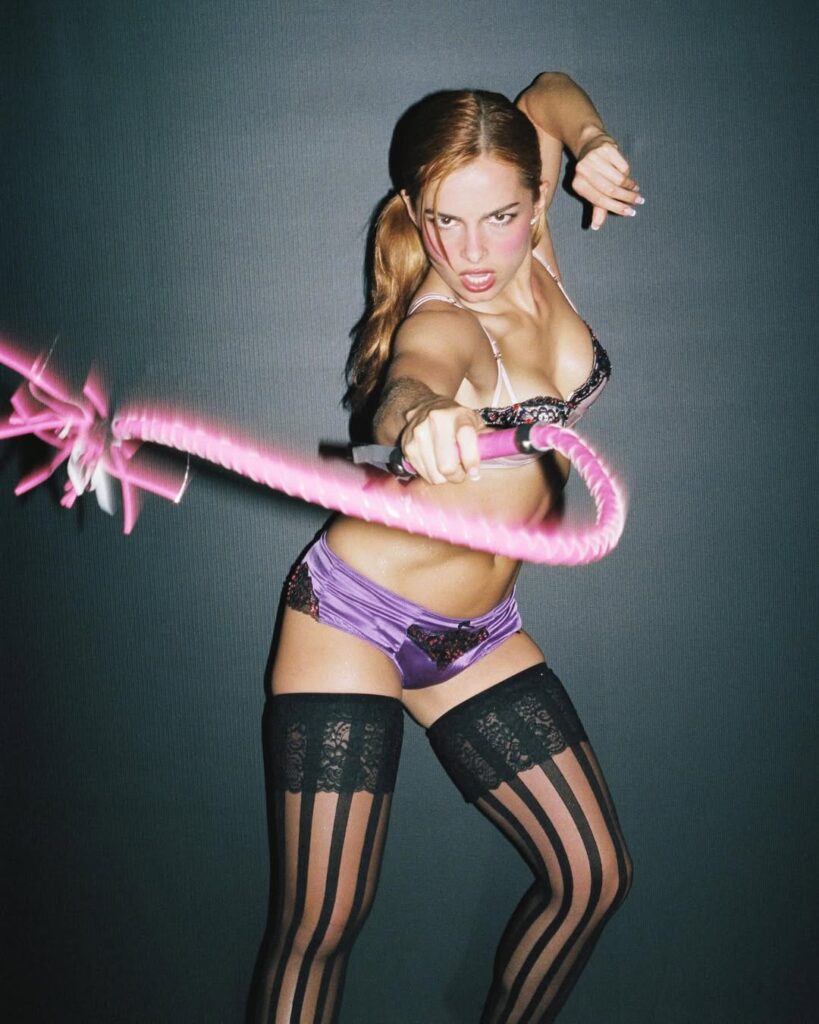

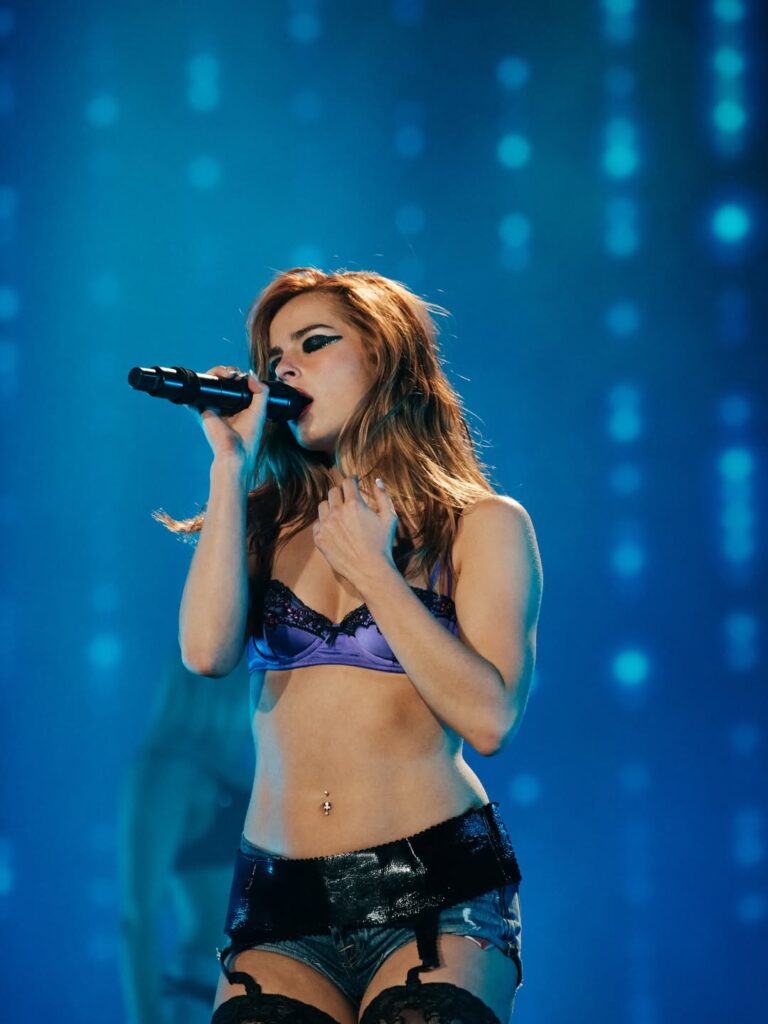

Before discussing the Addison Rae deepfake impact, it is important to understand who she is and what she has built. Addison Rae Easterling was born on October 6, 2000, in Lafayette, Louisiana. She rose to global fame through TikTok, where she became one of the platform’s most followed creators in its early years.

Her content started simply. She posted dance videos and lifestyle content that connected with millions of young viewers. As a result, her following exploded rapidly and she became one of the defining faces of the TikTok generation. Furthermore, her success on TikTok opened doors in music, acting, and brand partnerships that transformed her from a content creator into a full entertainment industry figure.

She starred in the Netflix film He’s All That in 2021. She released music and built brand deals with major companies. Moreover, she has consistently worked to expand her career beyond social media into mainstream entertainment. In other words, she built something real, meaningful, and hard-earned through years of consistent creative work.

That makes the Addison Rae deepfake situation even more damaging. Her reputation, her brand, and her public image are her livelihood. Deepfake content attacks all three simultaneously.

Addison Rae Personal Attributes Table

| Attribute | Details |
|---|---|
| Full Name | Addison Rae Easterling |
| Date of Birth | October 6, 2000 |
| Age | 24 years old as of 2025 |
| Birthplace | Lafayette, Louisiana, USA |
| Nationality | American |
| Profession | Content Creator, Actress, Singer |
| Platform | TikTok, Instagram, YouTube |
| TikTok Followers | Over 88 million |
| Known For | Dance videos, He’s All That, music career |
| Brand Partnerships | Multiple major brand collaborations |

Addison Rae Career Attributes Table

| Attribute | Details |
|---|---|
| TikTok Start | 2019 |
| Breakthrough Year | 2020 |
| Acting Debut | He’s All That on Netflix, 2021 |
| Music Release | Obsessed, released 2021 |
| Brand Deals | American Eagle, Reebok, L’Oreal and others |
| Estimated Net Worth | Approximately 15 million dollars |
| Social Media Reach | Hundreds of millions across all platforms |
| Industry Recognition | Among highest earning TikTok creators globally |

Deepfake Technology Attributes Table

| Attribute | Details |
|---|---|
| What Is a Deepfake | AI-generated fake video or image of a real person |
| Technology Used | Deep learning and generative AI models |
| Primary Harm | Non-consensual sexual content, reputation damage |
| Who Is Most Targeted | Women, celebrities, public figures |
| How Fast Content Spreads | Viral within hours on social platforms |
| Detection Difficulty | Increasingly hard to detect with the naked eye |
| Legal Status in US | Patchwork of state laws; federal TAKE IT DOWN Act (2025) covers non-consensual intimate imagery only |
| Reported Cases Globally | Hundreds of thousands of victims annually |

Legal and Support Attributes Table

| Attribute | Details |
|---|---|
| US Federal Law | TAKE IT DOWN Act (signed May 2025) covers non-consensual intimate imagery, including AI-generated; no comprehensive deepfake law as of 2025 |
| State Laws | Over 20 states have passed deepfake-related legislation |
| Key States with Laws | California, Texas, Virginia, Georgia among others |
| Platform Policies | Most major platforms prohibit non-consensual deepfake content |
| Removal Process | Victims must report content for manual review |
| Support Organizations | Cyber Civil Rights Initiative, StopNCII.org |
| Legal Remedies | Civil lawsuits, platform takedowns, criminal charges in some states |
| Average Removal Time | Can take days to weeks despite urgent reports |

What Is a Deepfake and Why Is It So Harmful?

To fully understand the Addison Rae deepfake impact, you first need to understand what a deepfake actually is. A deepfake is a piece of artificial intelligence-generated content that places a real person’s face or body into a video or image they never actually appeared in. The technology uses deep learning models trained on large amounts of real photos and videos of the target person.

The results can be shockingly convincing. Early deepfakes were easy to spot because of blurring, unnatural blinking, or distorted edges. However, the technology has improved dramatically in recent years. Today, even trained experts sometimes struggle to identify deepfake content without specialized detection tools.

The Primary Target of Deepfake Content

The vast majority of deepfake content produced online is non-consensual sexual content featuring women. Research has consistently found that over 90 percent of deepfake videos online fall into this category. Furthermore, celebrities and public figures with large amounts of publicly available photos and videos are among the most frequently targeted individuals.

Addison Rae fits this profile exactly. She is young, female, and highly visible online. Millions of photos and videos of her exist across the internet. As a result, she has become a target for this type of harmful content in a way that is directly connected to her professional success and public visibility.

Why Deepfakes Are More Damaging Than Other Harassment

Traditional online harassment is harmful enough. However, deepfakes add a uniquely devastating dimension. The content appears real. Viewers who see it may genuinely believe it is authentic. Furthermore, once deepfake content is shared online, it spreads rapidly and becomes almost impossible to fully remove.

The burden then falls on the victim, who must spend enormous energy proving the content is fake, while its creator is never asked to prove it is real. The emotional and psychological damage happens immediately upon discovery, but the legal and platform removal processes take much longer. In other words, the harm arrives instantly while the remedies arrive slowly, if they arrive at all.

How the Addison Rae Deepfake Content Has Impacted Her Life

The Addison Rae deepfake situation has created real and serious consequences across multiple areas of her life. Understanding these impacts helps illustrate why deepfake content is not a minor internet problem. It is a genuine form of abuse that causes lasting damage.

Impact on Mental Health

Being the subject of non-consensual deepfake content is a deeply violating experience. Victims consistently describe feelings of powerlessness, anxiety, and a profound sense of personal violation. Furthermore, knowing that millions of people may have seen fabricated content featuring your face and body creates a kind of public humiliation that is extremely difficult to process.

For someone like Addison Rae, whose entire career is built on her public image and personal brand, the psychological impact is amplified. Every public appearance, every new post, and every interview becomes shadowed by awareness that harmful fake content about her exists and continues to circulate online.

Mental health professionals who work with deepfake victims describe a consistent pattern of symptoms. These include severe anxiety, difficulty trusting online spaces, withdrawal from public engagement, and in serious cases, symptoms consistent with post-traumatic stress. Moreover, young victims who grew up online and built their identities around digital spaces feel the loss of safety in those spaces particularly acutely.

Impact on Professional Reputation

The professional impact of the Addison Rae deepfake situation extends beyond personal distress. Brand partners and business collaborators conduct due diligence before entering partnerships. When harmful fake content about a creator circulates widely, it creates complications in those professional relationships even when everyone involved knows the content is fabricated.

Furthermore, advertisers and corporate partners are risk-averse by nature. Some may distance themselves from a creator associated with controversial content even when that creator is entirely a victim rather than a participant. In other words, the person being harmed can end up paying a professional price for something done to them without their knowledge or consent.

Impact on Public Perception

Public perception is another area where deepfake content causes real damage. Not every viewer who encounters deepfake content reads the context or disclaimer. Many people share content without verifying its authenticity. As a result, a significant portion of viewers may form incorrect beliefs about a real person based entirely on fabricated material.

Correcting false public perception is extraordinarily difficult. Retractions and clarifications rarely travel as far or as fast as the original false content. Moreover, search results and social media algorithms can continue surfacing the fake content long after its removal has been requested and partially processed. As a result, the reputational damage from deepfakes can persist for months or even years.

The Psychological Toll on Deepfake Victims

The Addison Rae deepfake experience is unfortunately not unique. Thousands of women face similar situations every year. Research into the psychological impact on deepfake victims reveals a consistent and deeply concerning pattern of harm.

Loss of Control and Autonomy

One of the most psychologically damaging aspects of deepfake victimization is the complete loss of control over one’s own image. Every person has a fundamental expectation of control over how their body and face are represented publicly. Deepfakes strip that control away entirely.

Victims describe this experience as deeply dehumanizing. Furthermore, the knowledge that technology can place your face into any context imaginable, without your consent and with increasing realism, creates a persistent sense of vulnerability that does not easily go away. In other words, discovering that a deepfake of you exists permanently changes how you relate to your own public presence.

Online Engagement Changes

Many deepfake victims significantly change their online behavior after discovering fabricated content about themselves. Some reduce the frequency of posting photos or videos. Others make accounts private or restrict who can view their content. Furthermore, some step back from public platforms entirely for extended periods to protect their mental health.

For creators like Addison Rae whose careers depend on consistent public engagement, these behavioral changes carry direct financial consequences. Reducing content output affects algorithm performance, follower engagement, and brand partnership opportunities. As a result, the financial harm from deepfakes compounds the emotional and reputational damage in ways that are rarely discussed publicly.

Relationships and Trust

Deepfake victimization also affects personal relationships and the ability to trust others. Victims often become hyperaware of how their image might be used or misused. Furthermore, they may develop anxiety about meeting new people who may have encountered fabricated content. The boundary between public and private self feels violated in a way that is hard to explain to people who have not experienced it.

Moreover, friends and family members of deepfake victims are also affected. They must navigate their own feelings about the situation while supporting someone they love through a unique and deeply unsettling form of harm.

The Legal Landscape Around Deepfakes in 2025

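The Addison Rae deepfake situation exists within a legal landscape that is still catching up to the technology. As of 2025, federal law in the United States addresses only part of the problem. The TAKE IT DOWN Act, signed in May 2025, makes it a federal crime to knowingly publish non-consensual intimate imagery, including AI-generated deepfakes, and requires covered platforms to remove such content within 48 hours of a valid request. There is still no comprehensive federal law covering other forms of deepfake content, so victims are left with limited and inconsistent options depending on where they live.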

State Level Progress

More than 20 states have passed legislation addressing deepfake content in various forms. California, Texas, Virginia, and Georgia are among the states that have moved furthest in creating legal protections for victims. Furthermore, several states have specific laws targeting non-consensual deepfake pornography with criminal penalties for creators and distributors.

However, the patchwork nature of state laws creates significant enforcement problems. Content can be created in one state, hosted on servers in another, and viewed globally. Jurisdiction questions complicate every legal case. Moreover, perpetrators who create deepfake content often hide behind anonymity tools that make identification and prosecution extremely difficult.

Platform Responsibility

Major social media and content platforms have policies prohibiting non-consensual deepfake content. However, the gap between policy and enforcement remains significant. Platforms rely primarily on user reports to identify and remove violating content. Furthermore, the volume of content uploaded every minute makes proactive detection enormously challenging even with AI-assisted moderation tools.

Victims must often submit multiple reports before content is removed. In addition, removal from one platform does not prevent the content from being reposted on others. As a result, the process of cleaning up deepfake content is ongoing, exhausting, and never fully complete.

What Needs to Change

Advocates and legal experts consistently call for several specific changes to better protect deepfake victims. Comprehensive federal legislation on non-consensual deepfake content, reaching beyond intimate imagery, would create consistent protections across all states. Furthermore, platforms need rapid response protocols for deepfake reports that are mandatory and actually enforced, rather than treating such reports the same as ordinary content violations.

Technology companies that build AI image generation tools also bear responsibility for implementing safeguards that prevent their technology from being used to create deepfake content of identifiable real people. Moreover, digital literacy education needs to be built into school curriculums so that young people understand both how to spot deepfakes and why creating them causes genuine harm.

How Celebrities and Public Figures Are Fighting Back

Addison Rae is not alone in facing deepfake content. Many celebrities and public figures have spoken out about their own experiences and taken steps to fight back against this form of digital abuse.

Speaking Out Publicly

One of the most powerful tools available to victims is speaking openly about their experience. When public figures share their stories, it accomplishes several things simultaneously. It humanizes the harm for audiences who might otherwise see deepfakes as a minor or abstract issue. Furthermore, it encourages other victims to come forward and seek support rather than suffering in silence.

Several high-profile celebrities have used their platforms to demand stronger legal protections and platform accountability. Their voices carry weight in public discourse and have contributed to legislative progress at both state and federal levels. Moreover, public awareness campaigns built around real stories of harm are far more effective at changing behavior and policy than abstract statistics alone.

Legal Action

Some victims have pursued legal action against deepfake creators where identification is possible. Civil lawsuits seeking damages for defamation, intentional infliction of emotional distress, and violations of right of publicity have been filed in multiple jurisdictions. Furthermore, in states with specific criminal deepfake laws, some perpetrators have faced criminal charges and prosecution.

Legal action serves both as a direct remedy and as a deterrent. When creators of deepfake content face real consequences, others considering similar actions may think twice. Moreover, successful lawsuits establish legal precedents that strengthen future cases and clarify the legal standards applicable to deepfake content.

Support Networks and Organizations

Several organizations exist specifically to support victims of non-consensual deepfake content. The Cyber Civil Rights Initiative provides resources, legal referrals, and emotional support for victims. StopNCII.org is a tool that lets victims create digital fingerprints (hashes) of harmful images on their own device, so that participating platforms can detect and remove matching content more efficiently without the images themselves being uploaded.
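The idea of a digital fingerprint can be illustrated with a minimal sketch. This is a toy under stated assumptions, not the algorithm any real service uses: it applies a simple "average hash" to a tiny grid of grayscale values, and every name in it (`average_hash`, `hamming_distance`, `is_probable_match`, the sample grids) is hypothetical. It only aims to show why a fingerprint can still match a slightly altered repost while the image itself is never shared.

```python
from typing import List

# Hypothetical stand-in for a small grayscale image (values 0-255).
Pixels = List[List[int]]


def average_hash(pixels: Pixels) -> int:
    """Build a bit fingerprint: 1 where a pixel is brighter than the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


def is_probable_match(a: int, b: int, max_distance: int = 2) -> bool:
    """Treat fingerprints within max_distance bits as the same image.

    The threshold of 2 is an arbitrary illustrative choice.
    """
    return hamming_distance(a, b) <= max_distance


original = [
    [10, 200, 30, 220],
    [15, 210, 25, 230],
    [12, 205, 35, 225],
    [18, 215, 28, 235],
]
# Slightly brightened copy, as might happen after re-encoding or a filter.
reposted = [[min(255, v + 5) for v in row] for row in original]
# An unrelated image with the opposite light/dark layout.
unrelated = [
    [200, 10, 220, 30],
    [210, 15, 230, 25],
    [205, 12, 225, 35],
    [215, 18, 235, 28],
]

fingerprint = average_hash(original)
print(is_probable_match(fingerprint, average_hash(reposted)))
print(is_probable_match(fingerprint, average_hash(unrelated)))
```

Only the integer fingerprint would need to leave the victim’s device in a scheme like this. Production systems use far more robust perceptual hashes and much larger images, so this sketch should be read as an illustration of the principle only.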

Furthermore, online communities where victims can share experiences and support each other have become important sources of validation and practical advice. In other words, no victim of deepfake content needs to navigate the experience entirely alone. Support structures exist and are growing as awareness of the issue increases.

What Young People Need to Know About Deepfakes

The Addison Rae deepfake situation is especially relevant to young audiences because Addison Rae’s primary fanbase consists largely of teenagers and young adults. Understanding what deepfakes are, why they cause harm, and how to respond if you encounter them is essential digital literacy for this generation.

Recognizing Deepfake Content

Learning to identify deepfake content is an increasingly important skill. Common signs include unnatural blinking patterns, slight blurring around the edges of the face, inconsistent lighting between the face and the background, and audio that does not quite match lip movements. Furthermore, context clues matter. If content seems completely out of character for the person it depicts, healthy skepticism is appropriate.

However, as the technology improves, visual detection becomes less reliable. As a result, the most important habit is to question the source of any shocking or sensitive content before sharing or believing it. Ask two questions: where did this content come from, and who benefits from it being shared?

What to Do If You Encounter Deepfake Content

If you encounter content you believe is a deepfake of a real person, the most helpful action is to report it to the platform immediately without sharing it further. Sharing deepfake content, even with the intention of exposing it, increases its reach and compounds the harm to the victim. Furthermore, reporting it directly helps platforms identify and remove it faster.

Moreover, if you know the person depicted, reaching out with care and directing them to support resources is a genuinely helpful response. Victims of deepfake content often feel isolated and are not always aware that resources and legal options exist.

10 Frequently Asked Questions About the Addison Rae Deepfake

What is the Addison Rae deepfake situation?

The Addison Rae deepfake situation refers to the creation and circulation of AI-generated fake content featuring Addison Rae’s likeness without her consent. Furthermore, this type of content causes serious harm to her reputation, mental health, and professional life. It is part of a wider crisis of non-consensual deepfake content targeting women and public figures online.

How has the Addison Rae deepfake affected her career?

The Addison Rae deepfake has created complications for her professional reputation and brand partnerships. Furthermore, the psychological toll of being a deepfake victim can affect a creator’s willingness and ability to engage publicly, which has direct consequences for a career built on consistent online presence and audience engagement.

Is creating a deepfake of someone illegal?

Laws vary by location. As of 2025, over 20 US states have laws specifically addressing non-consensual deepfake content, particularly sexual content. At the federal level, the TAKE IT DOWN Act, signed in May 2025, criminalizes knowingly publishing non-consensual intimate imagery, including AI-generated content, but there is no comprehensive federal law covering other kinds of deepfakes. Furthermore, enforcement remains difficult because of anonymity tools and jurisdictional complications in online cases.

What mental health impact do deepfakes have on victims?

Deepfake victims commonly experience severe anxiety, feelings of powerlessness, loss of trust in online spaces, and in serious cases, symptoms consistent with post-traumatic stress. Moreover, the loss of control over one’s own image is deeply dehumanizing and creates lasting psychological effects that extend well beyond the initial discovery of the content.

Can deepfake content ever be fully removed from the internet?

Full removal of deepfake content from the internet is extremely difficult to achieve. Content removed from one platform can be reposted on others. Furthermore, copies may exist on private servers or devices that are completely outside the reach of platform moderation. As a result, victims must engage in an ongoing process of reporting and removal that rarely achieves complete elimination.

What platforms have policies against deepfake content?

Most major social media platforms including TikTok, Instagram, YouTube, and X have policies prohibiting non-consensual deepfake content. However, the gap between policy and enforcement is significant. Furthermore, platforms rely primarily on user reports rather than proactive detection, which means harmful content can remain visible for extended periods before removal.

What should you do if you see a deepfake of someone online?

If you encounter deepfake content of a real person online, you should report it to the platform immediately and avoid sharing it further. Sharing the content increases its reach and compounds the harm to the victim. Furthermore, if you know the victim personally, directing them to support organizations like the Cyber Civil Rights Initiative is a genuinely helpful action.

How does deepfake technology work?

Deepfake technology uses artificial intelligence and deep learning models trained on large amounts of real photos and videos of a target person. The AI learns to map the target’s facial features onto other bodies or into different scenarios. Furthermore, the technology has improved dramatically in recent years, making modern deepfakes significantly more convincing and harder to detect than earlier versions.

Why are celebrities like Addison Rae particularly vulnerable to deepfakes?

Celebrities are particularly vulnerable to deepfake content because large amounts of publicly available photos and videos of them exist online. This gives deepfake AI models rich training data to work from. Furthermore, celebrities have high public profiles that make deepfake content about them more likely to spread widely and cause greater reputational damage than content targeting private individuals.

What changes are needed to better protect deepfake victims?

Advocates and legal experts call for a comprehensive federal deepfake law in the United States, mandatory rapid response protocols from platforms for deepfake reports, and greater responsibility from AI technology companies to prevent their tools from being used to create harmful content. Furthermore, digital literacy education about deepfakes in schools is essential so young people understand both the harm caused and how to recognize fabricated content.

Conclusion: The Real Cost of the Addison Rae Deepfake Crisis

The Addison Rae deepfake situation is not just a celebrity story. It is a window into a serious and growing form of digital abuse that affects thousands of people every year. Addison Rae built her career through talent, hard work, and a genuine connection with her audience. The deepfake content created about her without her consent is an attack on all of that.

The harm is real across every dimension. Mental health suffers. Professional reputation faces complications. Personal relationships feel the strain. Furthermore, the legal and technical remedies available to victims remain inadequate relative to the speed and scale at which harmful content can spread.

Understanding the Addison Rae deepfake situation in its full context means recognizing that this is not entertainment or a trivial internet controversy. It is abuse. It causes genuine suffering. Moreover, it represents a failure of the platforms, the legal system, and the cultural norms around digital consent that society needs to urgently address.

Progress is happening. State laws are being passed. Platforms are improving their policies. Organizations supporting victims are growing. However, the technology is evolving faster than the protections. As a result, public figures and ordinary people alike remain vulnerable in ways that demand faster and stronger responses from lawmakers, technology companies, and society as a whole.

Addison Rae deserves better. Every victim of deepfake content deserves better. Furthermore, every young person growing up online deserves to do so in a digital environment where their image cannot be stolen and weaponized against them without consequence. That is the standard we should be demanding and the future we should be building together.